At a certain point, most infrastructure teams run into the same question: how do cloud and bare metal costs compare once workloads stabilize?

Public cloud changed how companies build software. Spin up servers in minutes. Scale instantly. Pay for what you use.

That model is hard to beat early on. It removes friction and lets teams focus on building instead of managing infrastructure.

But over time, things change. Workloads stop being experimental. Traffic patterns become predictable. Systems settle into a steady state.

Then the question shifts from “how fast can we scale?” to “why are we paying this much for systems that barely change?”

Where the Costs Actually Come From

Cloud pricing is built around flexibility.

You are paying for elasticity, rapid provisioning, and managed environments. That tradeoff makes sense when demand is unpredictable. It becomes harder to justify when demand is steady.

Stable workloads often end up paying for features they no longer need. Then there are the costs that are easier to overlook.

Data transfer is a big one. Moving data between services, regions, and users can quietly become one of the largest parts of the bill. It rarely shows up as the headline cost, but it adds up fast as systems scale.

Abstraction is another layer. In public cloud environments, virtualization and tightly coupled managed services make things easier to deploy, but they also limit how much control you have over the underlying infrastructure. To work around that, teams often overprovision resources or spread workloads across multiple services, which increases cost.

Complexity adds pressure in a different way. As environments grow, so do dependencies, configurations, and services. Managing them takes time, tooling, and engineering effort, and those costs rarely get counted alongside the bill.

Then there is unpredictability. Monthly spend moves with usage, which makes forecasting difficult.

None of this is obvious at the beginning. It shows up gradually as systems mature.
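To make the comparison concrete, here is a rough sketch of how a team might put the cost categories above side by side. Everything in it is an assumption for illustration: the `MonthlyCost` fields, the `months_to_break_even` helper, and all of the dollar figures are placeholders, not real pricing from any provider. Swap in numbers from your own bills and quotes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MonthlyCost:
    """Hypothetical monthly cost breakdown for one environment, in USD."""
    compute: float        # instances or leased servers
    data_transfer: float  # egress and inter-region traffic
    operations: float     # engineering time and tooling

    def total(self) -> float:
        return self.compute + self.data_transfer + self.operations


def months_to_break_even(cloud: MonthlyCost,
                         bare_metal: MonthlyCost,
                         migration_cost: float) -> Optional[float]:
    """Months needed to recover a one-time migration cost.

    Returns None when bare metal is not cheaper per month,
    because the migration cost is then never recovered.
    """
    monthly_savings = cloud.total() - bare_metal.total()
    if monthly_savings <= 0:
        return None
    return migration_cost / monthly_savings


# Placeholder figures for illustration only, not real pricing.
cloud = MonthlyCost(compute=12_000, data_transfer=3_000, operations=2_000)
bare_metal = MonthlyCost(compute=6_000, data_transfer=500, operations=3_500)

print(months_to_break_even(cloud, bare_metal, migration_cost=35_000))  # 5.0
```

With these made-up inputs the move pays for itself in five months. The point is not the number but the structure: data transfer and operations sit next to compute as first-class line items, so the costs that are easy to overlook are part of the comparison from the start.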

How Infrastructure Strategy Evolves

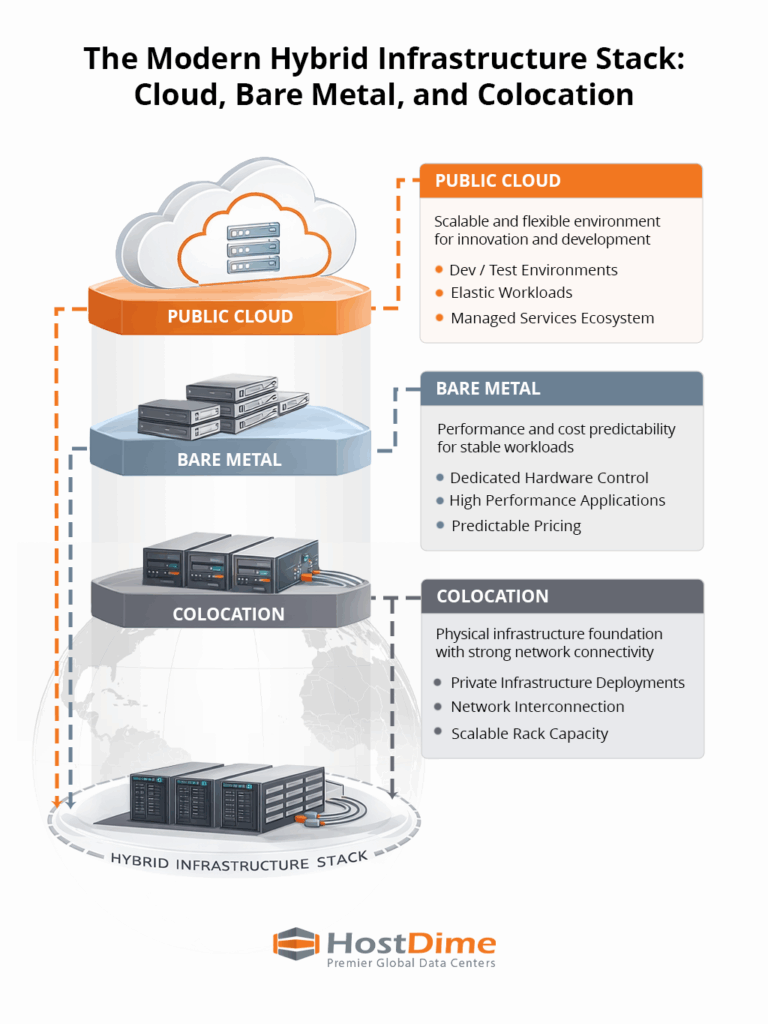

This is where teams start to rethink how infrastructure is structured. Instead of forcing everything into one environment, they begin placing workloads where they make the most sense.

Cloud still plays an important role. It is ideal for development, experimentation, and workloads that genuinely need elasticity. But stable, performance-sensitive systems often move elsewhere.

Bare metal brings control and predictable performance. Colocation provides the environment to scale that infrastructure without taking on the burden of running a facility. Power, cooling, and connectivity are already handled, so teams can focus on operating their systems.

Put together, this creates a more balanced model. Think about it this way:

Cloud for flexibility.

Bare metal for performance.

Colocation for long-term infrastructure scale.

Building a More Efficient Infrastructure Stack

As infrastructure takes up a larger share of spend, where each workload runs matters more. Hybrid environments reduce waste while keeping flexibility where it is needed.

You need an environment where compute, network, and infrastructure can work together cleanly. HostDime is built around that idea.

With high-density colocation, dedicated bare metal environments, and strong interconnection across multiple regions, HostDime supports the kind of hybrid architectures many platforms are moving toward.

For teams re-evaluating cloud vs bare metal cost, the goal is not to abandon the cloud. It is to stop overpaying for the parts of it that no longer fit.

The advantage comes from aligning each workload with the environment that actually serves it best. And for many growing platforms, that shift starts once the cloud stops working in their favor.